The demo worked. You saw it. Your team saw it. The agent completed the task, produced the output, and everyone in the room nodded.

Then you put it in production, and things started breaking in ways nobody could explain.

Here's what that is — and what it isn't. It's not a bad model. It's not a bad prompt. It's not a skill gap on your team. It's a visibility problem, and your demo was never designed to solve it.

Demos Are Built to Succeed

This isn't an accusation. It's architecture.

Every demo is constructed around a success path. You choose the input. You pick the scenario. You know the outcome you're building toward. The agent runs, it completes, and it looks clean. That's the job of a demo. It shows what's possible. It builds confidence. It gets the green light.

But "possible" and "reliable" are not the same thing.

Demos don't surface edge cases — you don't include them. They don't reveal failure modes — you route around them. They don't show what happens when the data is slightly different, the API is slow, or the user prompt arrives in an unexpected format.

Demos are structurally optimistic, by design. That's what makes them demos. The gap opens the moment you take that optimism into the real world, where none of those conditions are guaranteed.

You didn't build the wrong demo. You built exactly the right demo for what a demo is supposed to do. The problem is that nobody told you a demo is insufficient preparation for production. Those are two different environments with two different jobs, and the second one requires something the first one was never built to provide: observability.

"Did It Complete?" Is the Wrong Question

When something breaks in production, the first question is usually: did the agent complete the task?

That's understandable. Completion is the most visible signal. But it's also the least informative one.

Agents complete tasks that are subtly wrong all the time. They route through the wrong tool, misinterpret an intermediate output, or recover from an error in a way that technically produces a result — but not the right result. From the outside, completion looks like success. Inside the trace, it's a different story.

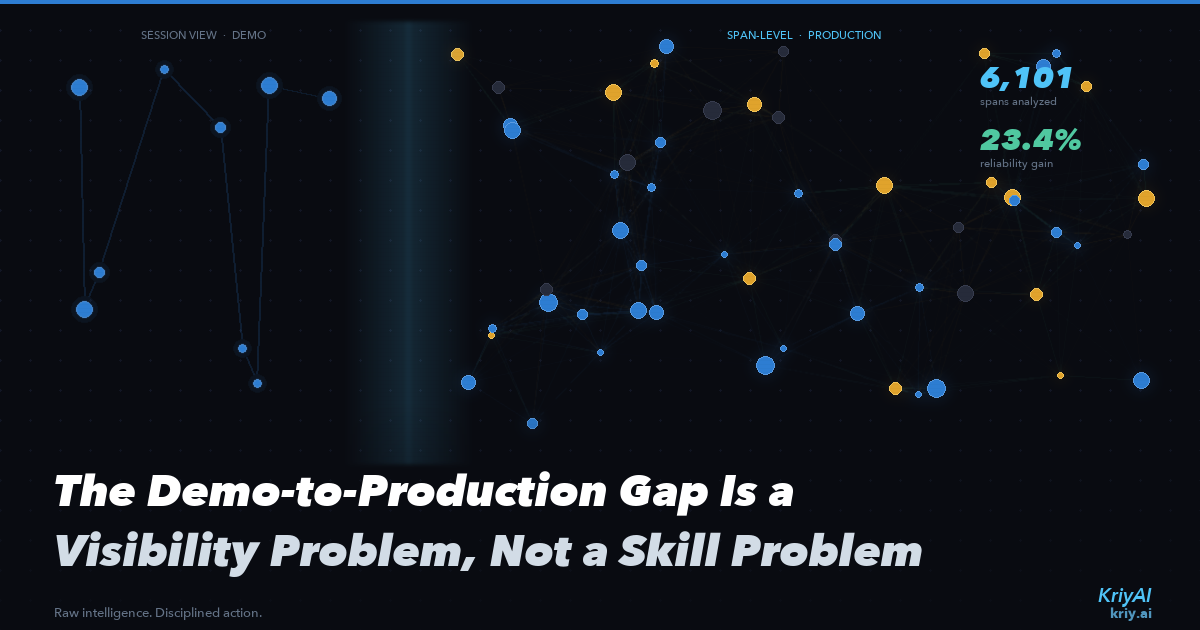

The real question isn't whether the agent completed. It's whether it completed correctly. And to answer that, you need visibility at the span level — not just the session level.

A session tells you the agent ran. Spans tell you what it did at every step: which tools were called, what outputs were returned, where the reasoning drifted, where a recovery was triggered. That's where the signal lives. That's where you find out whether the completion you observed was actually correct.

Without span-level visibility, you're watching the agent from the outside. You're asking "did it finish?" when the real diagnostic question is "did it finish the right way, and how do I know?"

The session view flattens everything into a binary. The span view opens up the middle — which is where almost every real failure lives.
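To make the session/span distinction concrete, here is a minimal Python sketch. The `Span` and `Session` shapes, their fields, and the tool names are hypothetical. They were chosen for illustration and do not come from any particular tracing product. The session view reduces a run to one bit. The span view walks each step and reports what that bit hides.

```python
from dataclasses import dataclass, field


@dataclass
class Span:
    """One step of an agent run (hypothetical schema for illustration)."""
    name: str                # tool or step that was invoked
    output: str              # what that step returned
    error: bool = False      # did the step raise or return an error?
    recovered: bool = False  # did the agent work around the error and continue?


@dataclass
class Session:
    """One end-to-end agent run: a completion flag plus its spans."""
    session_id: str
    completed: bool
    spans: list[Span] = field(default_factory=list)


def session_view(session: Session) -> str:
    # The session view flattens the whole run into a single binary signal.
    return "success" if session.completed else "failure"


def span_view(session: Session, allowed_tools: set[str]) -> list[str]:
    # The span view inspects every step for signals the binary hides.
    findings = []
    for i, span in enumerate(session.spans):
        if span.name not in allowed_tools:
            findings.append(f"span {i}: unexpected tool '{span.name}'")
        if span.error and span.recovered:
            findings.append(f"span {i}: error recovered in '{span.name}'")
    return findings


# A run that "completed" but took a detour through the wrong tool.
example = Session("s-001", completed=True, spans=[
    Span("search_orders", "3 results"),
    Span("search_customers", "0 results", error=True, recovered=True),
    Span("summarize", "Order shipped"),
])

print(session_view(example))  # success
for finding in span_view(example, allowed_tools={"search_orders", "summarize"}):
    print(finding)
# span 1: unexpected tool 'search_customers'
# span 1: error recovered in 'search_customers'
```

Here the session view reports success. The span view, meanwhile, surfaces an off-path tool call and a silent recovery at the same step. Real span schemas carry far more than this, including inputs, timings, and parent-child links. The point of the sketch is only where the check runs.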

Scale Makes Invisible Patterns Visible

The failure modes that break production agents aren't random. They're patterns. They appear under specific conditions, with specific inputs, at specific points in a workflow. But you can only see them once you have enough sessions to surface them.

One session tells you nothing. A hundred sessions give you hints. Eight hundred sessions give you data.

At KriyAI, we analyzed 801 sessions comprising 6,101 spans. Across that dataset, patterns emerged that were completely invisible at the demo stage — not because they were hidden, but because no single session generates enough signal to detect them. Individual runs don't compound. Accumulated span data does.
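One way to see why patterns only appear at scale is to count, for each failure signature, how many distinct sessions it shows up in. This Python sketch assumes a flat export of `(session_id, span_name, outcome)` records and a `min_sessions` threshold. The record shape, outcome labels, and threshold are all hypothetical. They do not describe the format of the KriyAI dataset mentioned above.

```python
from collections import Counter
from typing import Iterable

# Hypothetical flat span export: (session_id, span_name, outcome).
SpanRecord = tuple[str, str, str]


def recurring_patterns(
    records: Iterable[SpanRecord], min_sessions: int
) -> dict[tuple[str, str], int]:
    """Count the distinct sessions in which each non-"ok" (span_name, outcome)
    pair appears, keeping only pairs seen in at least `min_sessions` sessions."""
    seen: set[SpanRecord] = set()
    counts: Counter = Counter()
    for session_id, span_name, outcome in records:
        if outcome == "ok":
            continue
        if (session_id, span_name, outcome) in seen:
            continue  # count each signature at most once per session
        seen.add((session_id, span_name, outcome))
        counts[(span_name, outcome)] += 1
    return {sig: n for sig, n in counts.items() if n >= min_sessions}


records = [
    ("s1", "fetch_invoice", "timeout_retry"),
    ("s1", "fetch_invoice", "timeout_retry"),  # repeated within one session
    ("s2", "fetch_invoice", "ok"),
    ("s3", "fetch_invoice", "timeout_retry"),
    ("s4", "fetch_invoice", "timeout_retry"),
    ("s4", "parse_date", "wrong_format"),
]

print(recurring_patterns(records[:2], min_sessions=3))  # {}
print(recurring_patterns(records, min_sessions=3))
# {('fetch_invoice', 'timeout_retry'): 3}
```

A single session never clears the threshold, which is a statistical way of saying that one session tells you nothing. The threshold itself is a judgment call. Counting distinct sessions instead of raw span occurrences keeps one noisy run from looking like a pattern.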

With that visibility, teams were able to identify and address the actual root causes of their failure modes — not guess at them, not patch around them, but trace them to source. The result: a 23.4% improvement in agent reliability.

That number didn't come from a better model or a rewritten prompt. It came from knowing, at the span level, what was actually happening across a real production workload.

The Reframe

If your production agents are underperforming, the instinct is to look at the model, look at the prompt, look at the team. To treat underperformance as a competence problem — something to be fixed by better engineering or a smarter architecture.

Most of the time, it isn't. It's a visibility problem.

The teams that close the demo-to-production gap aren't doing it by rebuilding the demo. They're doing it by building observability into the production system from the start — instrumenting at the span level, not just the session level. They stop asking "did it complete?" and start asking "what did it do to complete, and was that the right path?"
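Instrumenting at the span level mostly means wrapping every tool call and reasoning step so that it emits a record, whether it succeeds or fails. The Python sketch below uses a hypothetical in-memory `Tracer`, and the tool names and attributes are made up for illustration. In a real system this role is usually played by a tracing library such as OpenTelemetry, whose API differs from what is shown here.

```python
import time
from contextlib import contextmanager
from typing import Any, Iterator


class Tracer:
    """Hypothetical minimal in-memory tracer, for illustration only."""

    def __init__(self) -> None:
        self.spans: list[dict[str, Any]] = []

    @contextmanager
    def span(self, session_id: str, name: str, **attributes: Any) -> Iterator[dict[str, Any]]:
        record: dict[str, Any] = {
            "session_id": session_id,
            "name": name,
            "attributes": attributes,
            "status": "ok",
        }
        start = time.perf_counter()
        try:
            yield record  # the caller can attach outputs to the record
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise  # instrumentation records the failure; it does not swallow it
        finally:
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)


tracer = Tracer()

# A successful tool call: the output is attached to its span.
with tracer.span("s-001", "tool:lookup_order", order_id="A17") as rec:
    rec["output"] = {"status": "shipped"}

# A failing step: the span is still recorded before the error propagates.
try:
    with tracer.span("s-001", "tool:parse_date", raw="next Tuesday"):
        raise ValueError("unrecognized date format")
except ValueError:
    pass  # the agent's recovery logic would run here

for s in tracer.spans:
    print(s["name"], s["status"])
# tool:lookup_order ok
# tool:parse_date error
```

The design choice that matters is the `finally` block. Because of it, the span is recorded even when the step raises. A failure that the agent later recovers from therefore still leaves evidence in the trace, instead of disappearing behind a completed session.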

They treat production as a data collection environment, not just a deployment environment. Every session generates signal. Every span is a data point. Over hundreds of sessions, patterns emerge that no single run could have surfaced.

That's not a philosophical shift. It's an operational one — and it's what separates teams that get stuck chasing production bugs from teams that systematically eliminate them.

What Changes When You Can See

When you have span-level visibility at scale, three things change.

First, you stop guessing. You can see exactly where the agent deviated from the expected path — not inferred from the final output, but observed in the trace.
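Seeing where the agent deviated can start with something as simple as comparing the observed sequence of span names against an expected path. This Python sketch assumes a single known-good path per task. That is a simplification, since real agents often have several acceptable paths. The step names are hypothetical.

```python
from typing import Optional, Sequence


def first_deviation(expected: Sequence[str], observed: Sequence[str]) -> Optional[int]:
    """Return the index of the first step where `observed` departs from
    `expected`, or None if the two paths are identical."""
    for i, (exp_step, obs_step) in enumerate(zip(expected, observed)):
        if exp_step != obs_step:
            return i
    if len(expected) != len(observed):
        # One path is a prefix of the other: they diverge where the shorter ends.
        return min(len(expected), len(observed))
    return None


expected = ["classify_request", "lookup_order", "summarize"]
observed = ["classify_request", "lookup_customer", "lookup_order", "summarize"]

print(first_deviation(expected, observed))  # 1
print(first_deviation(expected, expected))  # None
```

Both runs might end in a correct-looking summary. Only the trace shows that the second one took a detour at step 1. When a single path is too strict, a natural extension is to match against a set of acceptable paths or against per-step constraints.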

Second, you stop blaming the model. Most production failures aren't model failures. They're structural failures: tool routing issues, prompt handling edge cases, recovery logic that works in isolation but compounds incorrectly in sequence. Span data makes these visible without ambiguity.

Third, you stop being surprised. You develop a working model of where your agents are reliable and where they're brittle. That's not a concession — it's an engineering advantage. You can make better decisions about where to deploy, what to monitor, and what to fix next.
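A working map of brittle and reliable steps can begin as a per-step success rate over accumulated spans. This minimal Python sketch assumes that each span has already been labeled as succeeded or failed. The labeling rule and the step names are hypothetical. The sketch keeps the sample size next to each rate, because a 60% rate over five spans is a much weaker signal than the same rate over five hundred.

```python
from collections import defaultdict
from typing import Iterable


def step_reliability(outcomes: Iterable[tuple[str, bool]]) -> list[tuple[str, float, int]]:
    """Given (span_name, succeeded) pairs, return (span_name, success_rate, n)
    rows sorted from most brittle to most reliable."""
    totals: dict[str, int] = defaultdict(int)
    successes: dict[str, int] = defaultdict(int)
    for name, ok in outcomes:
        totals[name] += 1
        if ok:
            successes[name] += 1
    rows = [(name, successes[name] / totals[name], totals[name]) for name in totals]
    return sorted(rows, key=lambda row: (row[1], row[0]))


outcomes = (
    [("lookup_order", True)] * 9 + [("lookup_order", False)]
    + [("parse_date", True)] * 3 + [("parse_date", False)] * 2
)

for name, rate, n in step_reliability(outcomes):
    print(f"{name}: {rate:.0%} success over {n} spans")
# parse_date: 60% success over 5 spans
# lookup_order: 90% success over 10 spans
```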

The Demo Is Not the Ceiling

Production performance is not bounded by demo performance. It's bounded by visibility into what's actually happening.

The teams improving reliability by double digits aren't running better demos. They're watching span data across hundreds of sessions, 801 in our case, and acting on what they see.

The demo was never the problem. The absence of instrumentation after the demo — that's the gap. And visibility is how you close it.